Cheap, Quick, and Rigorous: Transforming Systematic Literature Reviews with AI and Python

Systematic Literature Reviews (SLRs) are widely recognized as the gold standard for evidence synthesis in academic research. By systematically identifying, screening, evaluating, and synthesizing prior studies, SLRs provide a rigorous foundation for theoretical development and empirical inquiry.

However, conducting an SLR is notoriously time-consuming, cognitively demanding, and methodologically complex.

Recent advances in Artificial Intelligence (AI), particularly in machine learning and natural language processing (NLP), are reshaping how researchers approach knowledge synthesis.

The Challenge of Traditional SLRs

A conventional SLR typically involves:

- Defining research questions

- Designing search strategies

- Screening titles and abstracts

- Conducting full-text eligibility assessment

- Extracting structured data

- Performing quality appraisal

- Synthesizing findings

When datasets involve hundreds or thousands of publications, manual screening becomes a major bottleneck.

AI can substantially reduce review time while maintaining methodological rigor, provided it is implemented responsibly.

How AI Transforms the SLR Workflow

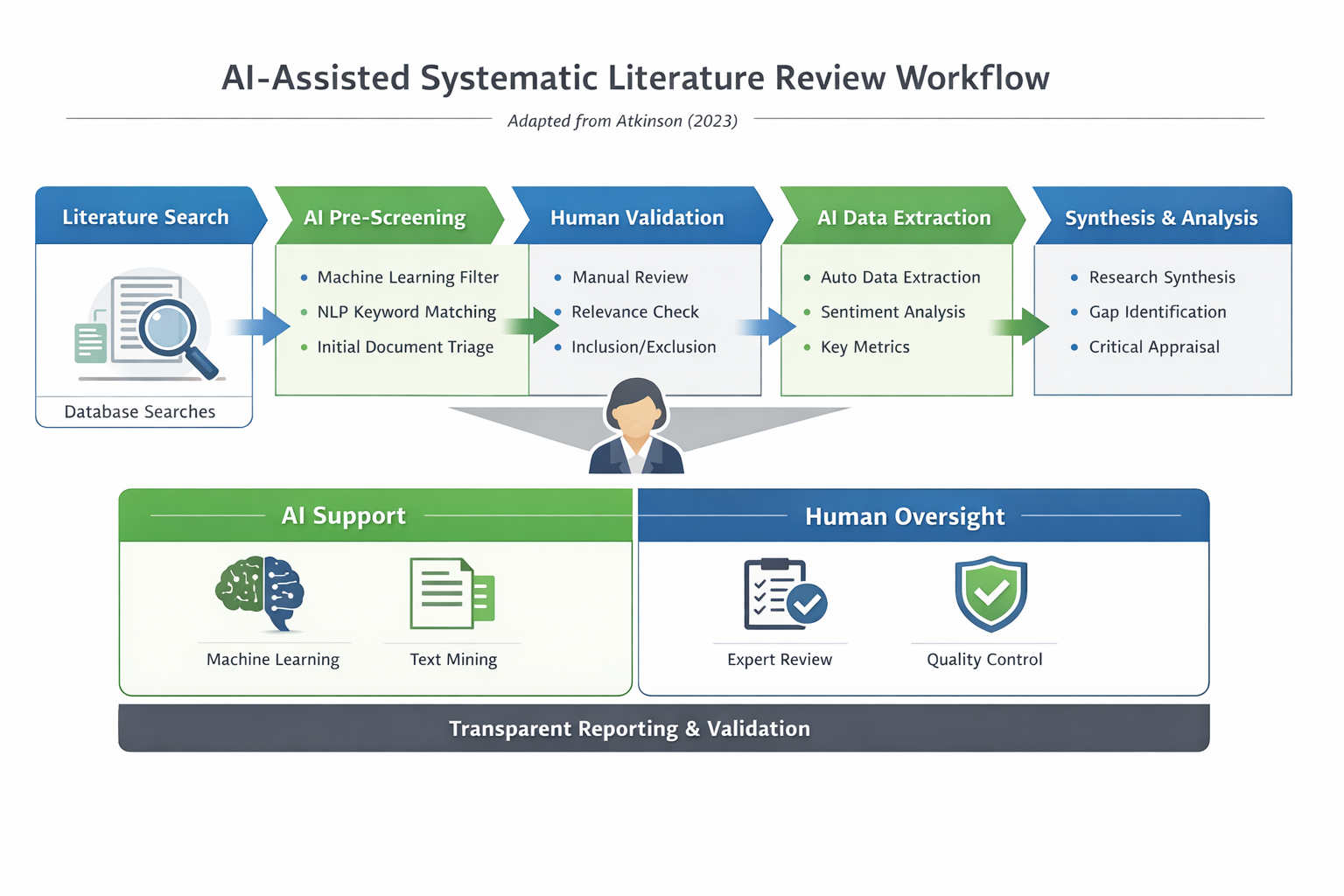

Figure 1. AI-Assisted Systematic Literature Review Framework.

AI does not replace researchers. Instead, it augments specific stages of the review process.

1️⃣ Automated Screening

Supervised machine learning and NLP models can classify abstracts against predefined inclusion and exclusion criteria. This reduces the manual burden of screening thousands of records, provided recall (the share of relevant studies retained) is checked against a manually screened sample.
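As a minimal sketch of supervised screening, the example below trains a tiny multinomial Naive Bayes classifier on a handful of labeled abstracts using only the Python standard library. The training texts, labels, and function names are hypothetical and chosen for illustration; a real review would likely rely on an established library and a much larger labeled set.

```python
import math
import re
from collections import Counter, defaultdict


def tokenize(text):
    """Lowercase the text and split it into alphabetic tokens."""
    return re.findall(r"[a-z]+", text.lower())


def train_naive_bayes(abstracts, labels):
    """Count token frequencies per label (e.g. 'include' / 'exclude')."""
    token_counts = defaultdict(Counter)
    label_counts = Counter(labels)
    for text, label in zip(abstracts, labels):
        token_counts[label].update(tokenize(text))
    vocabulary = {tok for counts in token_counts.values() for tok in counts}
    return token_counts, label_counts, vocabulary


def predict(model, text):
    """Return the label with the highest log-posterior (add-one smoothing)."""
    token_counts, label_counts, vocabulary = model
    total_docs = sum(label_counts.values())
    scores = {}
    for label, n_docs in label_counts.items():
        score = math.log(n_docs / total_docs)
        denom = sum(token_counts[label].values()) + len(vocabulary)
        for tok in tokenize(text):
            if tok in vocabulary:  # ignore tokens never seen in training
                score += math.log((token_counts[label][tok] + 1) / denom)
        scores[label] = score
    return max(scores, key=scores.get)


# Hypothetical labeled examples, for illustration only.
train_texts = [
    "Machine learning for knowledge management in project organizations",
    "Digital knowledge sharing tools in project teams",
    "Soil erosion patterns in coastal farmland",
    "Crop yield and rainfall variability",
]
train_labels = ["include", "include", "exclude", "exclude"]

model = train_naive_bayes(train_texts, train_labels)
decision = predict(model, "Knowledge management platforms for project teams")
```

The predicted label is only a suggestion: borderline or low-confidence records should still be routed to a human reviewer.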

2️⃣ Structured Data Extraction

Text-mining pipelines can automatically extract:

- Research objectives

- Methodological approaches

- Sample characteristics

- Key findings

This improves coding consistency and reduces human fatigue during large-scale reviews.
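A minimal rule-based sketch of such a pipeline follows. The regular expression, the list of method terms, and the output field names are assumptions made for illustration; real extraction pipelines usually need richer patterns or trained models, plus manual verification of the extracted values.

```python
import re

# Hypothetical vocabulary of methodological approaches to look for.
METHOD_TERMS = ["survey", "case study", "interview", "experiment", "meta-analysis"]

# Matches sample-size statements such as "n = 120" or "120 participants".
SAMPLE_SIZE_PATTERN = re.compile(
    r"\bn\s*=\s*(\d+)|\b(\d+)\s+(?:participants|respondents|firms|projects)\b",
    re.IGNORECASE,
)


def extract_fields(abstract):
    """Extract mentioned methods and the first reported sample size."""
    lowered = abstract.lower()
    methods = [term for term in METHOD_TERMS if term in lowered]
    match = SAMPLE_SIZE_PATTERN.search(abstract)
    sample_size = None
    if match:
        # Exactly one of the two groups is populated, depending on the branch.
        sample_size = int(match.group(1) or match.group(2))
    return {"methods": methods, "sample_size": sample_size}


record = extract_fields(
    "We conducted a survey of 212 respondents and follow-up interviews."
)
```

Returning a fixed dictionary shape for every abstract keeps the coding scheme consistent across records, even when a field cannot be found.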

3️⃣ Semantic Synthesis and Gap Detection

Large Language Models (LLMs) enable:

- Thematic clustering

- Trend detection

- Cross-study comparison

- Research gap identification

Such capabilities accelerate the transition from data collection to analytical insight.

Human-in-the-Loop: Preserving Rigor

Despite these advantages, AI-assisted SLRs require a human-in-the-loop approach.

Algorithmic bias, hallucinated outputs, and limited model transparency remain important methodological concerns. Therefore, AI should function as a decision-support system rather than an autonomous reviewer.

Transparent reporting, validation checkpoints, and reproducibility protocols help preserve academic rigor.
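One possible validation checkpoint is to compare the automated decisions against a manually screened sample and compute recall, that is, the share of truly relevant records that the tool retained. The sketch below assumes hypothetical record identifiers and a hypothetical function name.

```python
def screening_recall(human_included, tool_included):
    """Share of human-included records that the tool also included."""
    human_included = set(human_included)
    if not human_included:
        raise ValueError("The validation sample contains no relevant records.")
    retained = human_included & set(tool_included)
    return len(retained) / len(human_included)


# Hypothetical record IDs from a manually screened validation sample.
human_included = {"R01", "R04", "R07", "R09"}
tool_included = {"R01", "R02", "R04", "R09"}

recall = screening_recall(human_included, tool_included)
# Relevant records the tool missed; these need human follow-up.
missed = sorted(human_included - tool_included)
```

Reporting both the recall value and the list of missed records makes the checkpoint auditable by other reviewers.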

Practical Implementation with Python

Demonstration: AI-Assisted Screening in Practice

The dataset used in this demonstration originates from a bibliographic extraction conducted for my previously published study on Digital Knowledge Management in Project-Based Organizations.

For compliance reasons, the original dataset is not publicly shared. The example below illustrates the logic of the screening function, a keyword filter applied to abstracts, using a sample metadata file.

Python Execution

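```python
import re

import pandas as pd


def ai_assisted_screening(dataset_path, keywords):
    """Return the rows whose abstract mentions at least one keyword."""
    df = pd.read_csv(dataset_path)
    # Escape each keyword so it is matched literally, not as a regex.
    pattern = '|'.join(re.escape(k) for k in keywords)
    # Case-insensitive match; records with a missing abstract are excluded.
    mask = df['Abstract'].str.contains(pattern, case=False, na=False)
    relevant_papers = df[mask]
    print(f"Total relevant papers found: {len(relevant_papers)}")
    return relevant_papers


# Example usage:
# keywords = ['Artificial Intelligence', 'Automation', 'NLP']
# relevant_df = ai_assisted_screening('sample_metadata.csv', keywords)
```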

Dataset Reduction Impact


The AI-assisted keyword screening substantially reduced the dataset size, demonstrating how automated filtering can support efficient evidence triage in large bibliographic corpora.

In this demonstration, the dataset was reduced by approximately 88.44%, significantly narrowing the review scope while preserving topical relevance.
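For reference, a reduction figure of this kind is the share of records removed relative to the starting total. The short sketch below shows the arithmetic; the function name and the counts are hypothetical and are not the figures from the study.

```python
def reduction_percentage(total_records, retained_records):
    """Percentage of records removed by screening."""
    if total_records <= 0:
        raise ValueError("total_records must be positive")
    return 100 * (total_records - retained_records) / total_records


# Hypothetical counts for illustration; not the figures from the study.
example = reduction_percentage(total_records=2000, retained_records=500)
```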

Such early-stage computational filtering enables researchers to allocate cognitive effort to higher-level analytical synthesis rather than mechanical exclusion tasks.

Stopword Filtering and Thematic Signal Extraction


After stopword removal and text normalization, thematic signal extraction reveals dominant research terms across the screened abstracts.

High-frequency non-informative words (e.g., the, and, of, to, in) were filtered to enhance interpretability and analytical clarity. The resulting keyword frequency distribution provides a cleaner representation of the intellectual structure within the dataset.

This step strengthens transparency and reproducibility by ensuring that visualized patterns reflect substantive research themes rather than linguistic artifacts.
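A minimal sketch of this step, assuming a deliberately small stopword list and hypothetical sample abstracts, could look as follows. Production pipelines would typically use a fuller stopword list and additional normalization such as lemmatization.

```python
import re
from collections import Counter

# A deliberately small, hypothetical stopword list.
STOPWORDS = {"the", "and", "of", "to", "in", "a", "for", "is", "on"}


def keyword_frequencies(abstracts, top_n=5):
    """Count non-stopword tokens across abstracts after lowercasing."""
    counts = Counter()
    for text in abstracts:
        tokens = re.findall(r"[a-z]+", text.lower())
        counts.update(tok for tok in tokens if tok not in STOPWORDS)
    return counts.most_common(top_n)


# Hypothetical abstracts, for illustration only.
sample_abstracts = [
    "The role of knowledge management in project organizations",
    "Knowledge sharing and the digital transformation of project work",
]
top_terms = keyword_frequencies(sample_abstracts, top_n=2)
```

Keeping the stopword list in the script, rather than applying it by hand, lets others reproduce exactly which terms were filtered.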

From Automation to Augmentation

This methodological experimentation builds upon prior empirical work and reflects an ongoing exploration of AI-assisted research workflows.

Rather than replacing scholarly judgment, AI serves as a computational amplifier: it accelerates screening, enhances thematic clarity, and supports reproducible analytical pipelines.

When implemented responsibly within a human-in-the-loop framework, AI transforms Systematic Literature Reviews from a purely manual endeavor into a scalable, data-informed, and methodologically transparent research process.

References & Acknowledgement

The phrase “Cheap, Quick, and Rigorous?” has been discussed in methodological debates on AI-assisted systematic reviews. See:

Atkinson, P. (2023). Cheap, Quick, and Rigorous? Artificial Intelligence and the Systematic Literature Review.

This blog post reflects a practical and exploratory extension of that broader discussion.
